What happens after launch? A post-launch analysis guide

Feb 9, 2026

5 mins read

Written by Imrana Essa

A launch does not end when the product goes live. The real insights appear once users start interacting, exploring features, and forming opinions.

Post-launch analysis helps you understand these early signals and measure the true impact of your launch using product launch analytics.

By reviewing performance, feedback, and user behavior together, post-launch analysis turns raw results into clear next steps. It helps teams improve quickly and avoid repeating the same mistakes in future launches.

This guide covers what post-launch analysis is, how to run it effectively, and how to apply what you learn within the first 30 days.

What is a post-launch analysis?

A post-launch analysis is the process of evaluating how a product performed after it was released to users. It focuses on real outcomes rather than expectations, using data, feedback, and user behavior to understand what actually happened once the launch was live.

Unlike launch planning or execution, post-launch analysis looks at results over time. With insights from a powerful product analytics tool, teams can assess whether the launch achieved its intended goals, where users faced friction, and which parts of the product or messaging delivered value. This makes it easier to decide what needs improvement, what should be prioritized next, and what should be avoided in future launches.

Post-launch analysis is also important because early signals can be misleading. Initial sign-ups or interest may look promising, but sustained product usage, engagement, and feedback often tell a different story. By reviewing performance after launch, teams can catch issues early, validate assumptions, and turn launch outcomes into actionable learnings instead of one-off results.

Goals of post-launch analysis

The purpose of post-launch analysis is not just to review results, but to guide better decisions moving forward. The key goals include:

- Understand whether the launch met its original objectives

- Identify issues that affected user experience after release

- Measure the real impact of the launch across different user segments

- Prioritize fixes and improvements based on impact and effort

- Capture learnings that can improve future product launches


How to conduct a post-launch analysis (step-by-step)

A post-launch analysis works best when feedback, behavior, and outcomes are reviewed together. Looking at only one signal often leads to surface-level conclusions, while combining them gives a clearer picture of how the launch actually performed.

Step 1. Collect feedback from every relevant channel

Start by gathering feedback wherever users interact with your product. This usually includes support tickets, onboarding surveys, sales conversations, customer interviews, and public reviews. Each channel reflects a different part of the user experience, which is why relying on just one can be misleading.

At this stage, pairing feedback with actual usage data helps validate what users say against what they do. Tools like Usermaven can help teams connect qualitative feedback with real product interactions instead of reviewing them separately.
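To make "validate what users say against what they do" concrete, here is a minimal Python sketch. It assumes feedback has already been tagged with a topic, and that topic names match feature names in your usage export. The sample records and the `feedback_vs_usage` function are hypothetical and are not part of any Usermaven API.

```python
from collections import defaultdict

# Hypothetical sample data: in practice these would come from a support tool
# (tagged feedback) and a product analytics export (usage events).
feedback = [
    {"user_id": "u1", "topic": "reports"},
    {"user_id": "u2", "topic": "reports"},
    {"user_id": "u3", "topic": "onboarding"},
]
usage_events = [
    {"user_id": "u1", "feature": "reports"},
    {"user_id": "u1", "feature": "reports"},
    {"user_id": "u3", "feature": "dashboard"},
]

def feedback_vs_usage(feedback, usage_events):
    """For each feedback topic, return the share of users who raised it
    and also used the feature with the same name (an assumed mapping)."""
    used = defaultdict(set)    # feature -> user_ids who used it
    for event in usage_events:
        used[event["feature"]].add(event["user_id"])
    raised = defaultdict(set)  # topic -> user_ids who mentioned it
    for item in feedback:
        raised[item["topic"]].add(item["user_id"])
    return {
        topic: len(users & used[topic]) / len(users)
        for topic, users in raised.items()
    }

print(feedback_vs_usage(feedback, usage_events))
```

A low share for a topic suggests the feedback may reflect expectations rather than hands-on experience, or that users could not find the feature. Either reading is worth checking before acting on the feedback.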

Step 2. Review product usage and post-launch behavior

Once feedback is collected, look closely at how users behave after the product goes live. Pay attention to user activation, feature adoption, and where users drop off early in their journey. These signals often reveal friction that testing or internal reviews failed to catch.

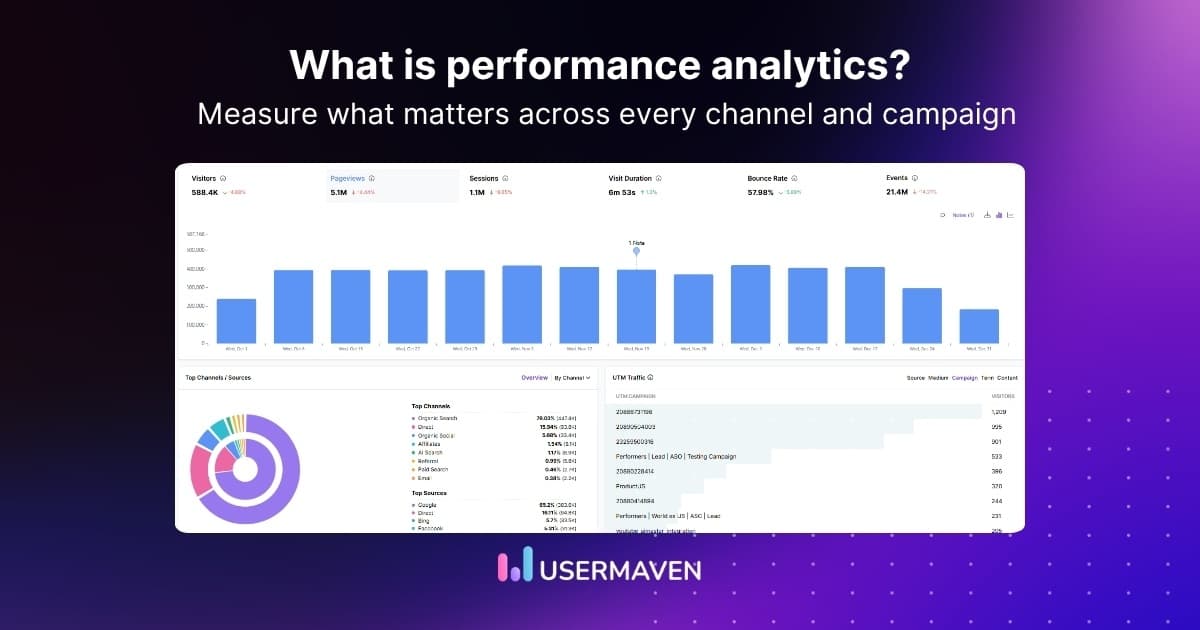

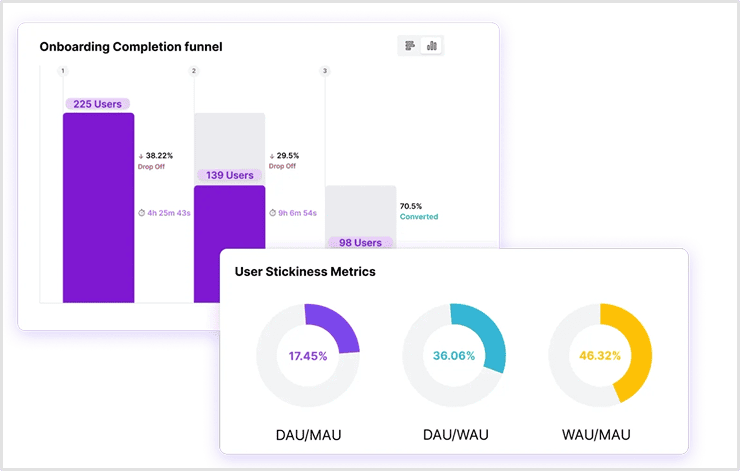

Analyzing post-launch user flows and funnels through a product analytics dashboard can show exactly where users struggle, stall, or abandon key actions. For example, in Usermaven, you can visualize post-launch funnels and see where users abandon flows or fail to activate key features.
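Funnel reports do this arithmetic for you, but the underlying calculation is simple. Each step's conversion equals the number of users who reached that step divided by the number who reached the previous step. The sketch below uses hypothetical step names and counts, plus a hypothetical `funnel_report` helper, to show how the largest drop-off can be located.

```python
def funnel_report(step_counts):
    """Given ordered (step_name, users_reaching_step) pairs, return each step's
    conversion from the previous step and from the first step."""
    first = step_counts[0][1]
    prev = first
    report = []
    for name, count in step_counts:
        report.append({
            "step": name,
            "users": count,
            "step_conversion": count / prev if prev else 0.0,
            "overall_conversion": count / first if first else 0.0,
        })
        prev = count
    return report

# Hypothetical post-launch funnel counts
funnel = [
    ("signed_up", 1000),
    ("created_project", 620),
    ("invited_teammate", 240),
    ("activated_feature", 180),
]

report = funnel_report(funnel)
for row in report:
    print(row["step"], row["users"],
          f"step {row['step_conversion']:.0%}", f"overall {row['overall_conversion']:.0%}")

# The step with the lowest step-to-step conversion is the biggest drop-off.
worst = min(report[1:], key=lambda r: r["step_conversion"])
print("Largest drop-off at:", worst["step"])
```

Looking at step-to-step conversion, rather than only overall conversion, shows where in the journey users stall.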

Step 3. Focus on outcomes rather than surface activity

High traffic, sign-ups, or engagement can look positive but still hide deeper issues. Post-launch analysis should focus on outcomes such as retention, conversion rate, upgrades, or revenue impact, not just activity volume.

When you review how users move from first interaction to meaningful actions after launch, including signals of product stickiness, it helps to see behavior and outcomes side by side. When those signals live together, as they do in Usermaven, teams can quickly see which parts of the launch created lasting value and which ones only generated short-term interest.
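Sign-up volume and retention can tell different stories. The minimal sketch below computes one simple retention rate: the share of users who are active again at least seven days after signing up. The definition, the seven-day window, the sample data, and the `retention_rate` helper are all assumptions for illustration, since teams define retention in different ways.

```python
from datetime import date

# Hypothetical data: signup date and the set of dates each user was active.
users = {
    "u1": {"signup": date(2026, 2, 2), "active": {date(2026, 2, 2), date(2026, 2, 10)}},
    "u2": {"signup": date(2026, 2, 2), "active": {date(2026, 2, 2)}},
    "u3": {"signup": date(2026, 2, 3), "active": {date(2026, 2, 3), date(2026, 2, 11)}},
}

def retention_rate(users, min_days=7):
    """Share of users active again at least `min_days` after signing up."""
    if not users:
        return 0.0
    retained = sum(
        1 for u in users.values()
        if any((d - u["signup"]).days >= min_days for d in u["active"])
    )
    return retained / len(users)

print(f"{len(users)} sign-ups, retention: {retention_rate(users):.0%}")
```

The sign-up count alone says nothing about whether those users came back. The retention rate answers that directly and can be compared against a baseline over time.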

Step 4. Segment results to understand who is affected

Not all users experience a launch in the same way. Segmenting results by user type, plan, use case, or acquisition channel often reveals patterns that are invisible in aggregated data.

This step helps teams understand which segments benefited from the launch and which ones faced friction. It also prevents one-size-fits-all decisions that may solve minor issues while ignoring high-impact problems.
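A small example shows how an aggregate number can hide segment differences. The plan labels, activation flags, and the `rate_by_segment` helper below are hypothetical. The same grouping works for any segment key, such as use case or acquisition channel.

```python
from collections import defaultdict

# Hypothetical per-user records: a segment label and whether the user activated.
records = [
    {"plan": "free", "activated": True},
    {"plan": "free", "activated": True},
    {"plan": "free", "activated": True},
    {"plan": "pro", "activated": False},
    {"plan": "pro", "activated": False},
    {"plan": "pro", "activated": True},
]

def rate_by_segment(records, key="plan", metric="activated"):
    """Return the share of records with a truthy `metric`, grouped by `key`."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for r in records:
        totals[r[key]] += 1
        hits[r[key]] += int(bool(r[metric]))
    return {seg: hits[seg] / totals[seg] for seg in totals}

overall = sum(r["activated"] for r in records) / len(records)
print(f"overall: {overall:.0%}")
for seg, rate in rate_by_segment(records).items():
    print(f"{seg}: {rate:.0%}")
```

In this sample, an overall activation rate of roughly two in three masks a gap between free users (all activated) and pro users (one in three activated).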

Step 5. Prioritize fixes using impact and effort

After identifying post-launch issues, the next step is deciding what to address first. Focus on improvements that create meaningful impact for important user segments while remaining realistic in terms of effort and resources.

Prioritization works best when decisions are guided by post-launch performance data rather than urgency, opinions, or the loudest feedback.
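One way to make impact-and-effort prioritization explicit is to score each issue and rank by the ratio of impact to effort. The 1-to-5 scales, the tie-breaking rule, the `Issue` type, and the backlog items below are assumptions for illustration, not a prescribed framework.

```python
from dataclasses import dataclass

@dataclass
class Issue:
    name: str
    impact: int  # assumed 1-5 scale: effect on key segments or outcomes
    effort: int  # assumed 1-5 scale: estimated cost to fix (must be > 0)

def prioritize(issues):
    """Rank issues by impact-to-effort ratio, breaking ties by higher impact."""
    return sorted(issues, key=lambda i: (i.impact / i.effort, i.impact), reverse=True)

# Hypothetical post-launch backlog
backlog = [
    Issue("Confusing onboarding step", impact=5, effort=2),
    Issue("Typo in settings page", impact=1, effort=1),
    Issue("Slow report export", impact=4, effort=4),
    Issue("Missing SSO for teams", impact=5, effort=5),
]

for issue in prioritize(backlog):
    print(f"{issue.impact / issue.effort:.2f}  {issue.name}")
```

Breaking ties by impact keeps a cheap cosmetic fix from ranking alongside a costly but high-impact one purely because their ratios match.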

Step 6. Validate improvements and capture learnings

Once changes are made, it’s important to look beyond immediate results and observe how performance evolves over time. Short-term improvements can fade quickly, while meaningful changes tend to show consistent patterns across weeks.

Product trend analysis helps teams track these longer-term shifts in the adoption curve, engagement, or retention after launch, making it easier to confirm whether improvements are lasting or temporary. This closes the loop and helps avoid repeating the same mistakes in future launches.
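To separate lasting improvements from short spikes, you can require a metric to beat its baseline consistently over several weeks rather than once. In the minimal sketch below, the `is_sustained_improvement` helper, the three-week window, the minimum lift, and the weekly values are all hypothetical.

```python
def is_sustained_improvement(baseline, weekly_values, min_lift=0.0, min_weeks=3):
    """Return True if the metric beat the baseline by more than `min_lift`
    in each of the last `min_weeks` weeks."""
    recent = weekly_values[-min_weeks:]
    if len(recent) < min_weeks:
        return False  # not enough history to judge yet
    return all(v - baseline > min_lift for v in recent)

baseline_retention = 0.30                  # hypothetical baseline locked earlier
spike_then_fade = [0.38, 0.33, 0.30, 0.29]  # hypothetical weekly values
steady_gain = [0.33, 0.34, 0.35, 0.35]

print(is_sustained_improvement(baseline_retention, spike_then_fade, min_lift=0.02))  # False
print(is_sustained_improvement(baseline_retention, steady_gain, min_lift=0.02))      # True
```

The first series starts with the larger jump but fades back to the baseline. Only the second shows the consistent pattern that suggests a real change.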

Questions to ask after a product launch

Once the initial data and feedback are in, the most valuable part of a post-launch analysis is asking the right questions. These questions help teams move beyond metrics and focus on understanding what truly influenced the launch outcome.

Rather than reviewing everything at once, use these questions to guide discussions and uncover patterns that may not be obvious at first glance.

- Who is finding the most value in the product early on?

Identify the users who adopt quickly and engage consistently, as they often indicate whether the product is resonating with the intended audience.

- What problems are users actually solving with the product after launch?

Understand how users apply the product in real scenarios and whether those use cases align with the problems it was designed to solve.

- Where does the product fall short of user expectations?

Look for gaps between what users expected and what they experienced to uncover friction points that affect satisfaction and adoption.

- Which customer segments show the strongest long-term potential?

Evaluate retention, product usage patterns, and feedback to determine which segments are most likely to deliver sustained value over time.

- What new opportunities or extensions does early feedback reveal?

Use insights from early users to uncover adjacent features, improvements, or product ideas that were not part of the original launch plan.

These questions help teams turn post-launch data into decisions, ensuring the analysis leads to improvement rather than just reporting.

Post-launch analysis template: 30-day action plan

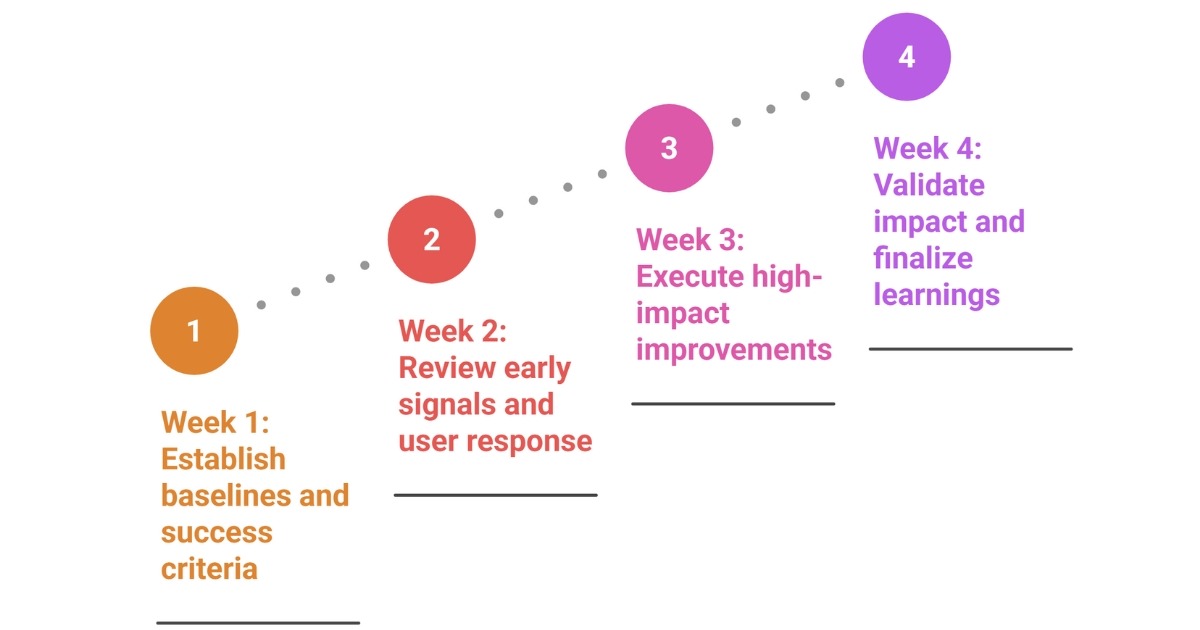

A 30-day post-launch analysis helps teams review performance while feedback and behavior are still fresh. Breaking the process into weekly phases keeps the analysis focused and prevents it from dragging on without clear outcomes.

Week 1: Establish baselines and success criteria

Define what success looks like for this launch now that it is live. Lock baseline metrics for usage, retention, and conversions so changes can be measured accurately later. Align teams on what signals matter most during the post-launch period.

The goal this week is clarity, not conclusions.

Week 2: Review early signals and user response

Analyze early behavior and feedback to understand how users are responding after launch. Look for unexpected patterns, early friction points, or segments behaving differently than planned.

This week is about forming hypotheses, not rushing into fixes.

Week 3: Execute high-impact improvements

Based on the insights gathered, implement a small number of focused changes. These should directly address the most impactful post-launch issues rather than cosmetic improvements.

Keep changes measurable so their impact can be reviewed clearly.

Week 4: Validate impact and finalize learnings

Review post-change performance against the baselines set in week one. Confirm whether outcomes improved and document what influenced those changes.

Wrap up the post-launch analysis by capturing learnings that can guide future launches and decision-making.

Post-launch audit checklist

Use this checklist to quickly audit whether your post-launch analysis is complete and actionable. Each item should be reviewed with evidence, not assumptions.

| Area | What to review | Done |
| --- | --- | --- |
| Goals | Launch goals are clearly defined | ⬜ |
| Baseline | Baseline metrics set for comparison | ⬜ |
| Outcomes | Retention, conversions, or revenue reviewed | ⬜ |
| Behavior | Post-launch activation and usage reviewed | ⬜ |
| Friction | Key drop-off or friction points identified | ⬜ |
| Feedback | Feedback collected across channels | ⬜ |
| Validation | Feedback aligned with actual usage | ⬜ |
| Segmentation | Results segmented by user type | ⬜ |
| Prioritization | Issues ranked by impact and effort | ⬜ |
| Execution | Improvements implemented and measured | ⬜ |
| Learnings | Key takeaways documented | ⬜ |
| Reuse | Learnings applied to future launches | ⬜ |


Common post-launch analysis mistakes to avoid

Having the right data is not always enough. Common post-launch mistakes can still lead teams in the wrong direction.

- Judging launch success based only on launch-week traffic or sign-ups

- Treating early interest as proof of long-term product adoption

- Acting on feedback without validating it against actual user behavior

- Giving equal weight to all feedback instead of prioritizing key segments

- Reviewing metrics in isolation without understanding the user context

- Fixing low-impact issues while ignoring high-friction user flows

- Making multiple changes at once without measuring individual impact

- Skipping segmentation and assuming all users experienced the launch the same way

- Failing to set clear baselines before measuring post-launch performance

- Not documenting learnings, causing the same launch mistakes to repeat

Taken together, a post-launch analysis helps teams understand what really happened once a product went live. By reviewing outcomes, user behavior, and feedback together, it becomes easier to identify what worked, what didn’t, and what should change before the next launch.

To do this effectively, teams need a clear way to connect those signals instead of reviewing them in isolation. Usermaven helps teams make sense of post-launch performance by bringing behavior, outcomes, and segments together in one place. As a leading marketing attribution tool, it shows which channels, actions, and user journeys contributed to meaningful results after launch.

Ready to replace guesswork with clear answers after your next launch?

Sign up or book a demo today to understand what actually drives results after every launch.

FAQs

1. What does post-launch analysis mean in simple terms?

Post-launch analysis means reviewing how a product performed after it was released. It focuses on real outcomes like usage, retention, and feedback rather than plans or expectations.

2. How soon should you start post-launch analysis?

Post-launch analysis should begin as soon as users start interacting with the product. Early signals are useful, but the analysis should continue long enough to capture meaningful behavior patterns.

3. How long should a post-launch analysis last?

Many teams run a post-launch analysis over about 30 days, but the timeline can vary with product complexity and launch goals. The key is to review both immediate signals and early indicators of long-term behavior, such as retention.

4. What is the difference between a post-launch analysis and a post-launch audit?

Post-launch analysis focuses on understanding performance and outcomes, while a post-launch audit is a structured check to ensure all key areas were reviewed and nothing was missed.

5. Who should be involved in post-launch analysis?

Post-launch analysis works best when product, marketing, growth, and customer-facing teams contribute insights. This ensures decisions are based on a complete view of product experience and performance.

6. Can post-launch analysis improve future launches?

Yes. Documented insights from post-launch analysis help teams avoid repeating mistakes and improve planning, messaging, and execution for future product launches.
